IT observability: definition and evolution of monitoring

What is observability?

Observability is a concept derived from control theory: a system is said to be observable if its internal state can be deduced from its outputs. Transposed to IT, this means the ability to understand what is happening inside your systems by analyzing what they produce (i.e. logs, metrics, and traces).

But what fundamentally distinguishes observability from traditional monitoring is its ability to answer questions you haven't thought to ask yet. Monitoring watches what you decided in advance to watch, while observability lets you explore unexpected behaviors and investigate unknown causes.

To take an analogy, monitoring is like a car dashboard: it displays predefined indicators (temperature, speed, oil level, etc.). Observability, by contrast, works more like an airplane's black box: it records everything so that any scenario can be understood after the fact.

The limits of traditional monitoring

Traditional monitoring rests on a simple principle: you define what you want to monitor, set thresholds, and receive alerts. This approach works very well on stable, predictable systems but becomes insufficient as soon as the architecture grows more complex.

Take a typical incident: your application is slow. Your monitoring tells you that a server's CPU usage is high. But it doesn't tell you why: not the request involved, not the impacted user(s), not the saturated downstream dependency. So you spend hours hunting for this information.

These shortcomings stem from structural limitations:

- Siloed vision: infrastructure, application, and network monitoring are managed separately, without correlation.

- Reactive approach: alerts arrive after the problem has already affected users.

- Built for the predictable: it is impossible to monitor what you did not anticipate.

- High MTTR (Mean Time To Resolve): most of the time goes into diagnosis, not resolution.

In short, classical monitoring suited the monolithic architectures of the 2000s, but it was not designed for today's distributed cloud architectures.

Why observability is critical in hybrid and cloud-native information systems

In a modern hybrid information system (IS), a single user request may cross, for example, an on-premises application, an Azure API gateway, three containerized microservices, a managed database, and a third-party authentication service, all within a few hundred milliseconds.

In this context, complexity is the norm: resources are ephemeral (containers appear and disappear with auto-scaling), deployments are frequent (up to several releases per day in the most mature organizations), and dependencies are numerous. Running this kind of information system without observability is like driving blindfolded.

The business stakes are significant: every minute of downtime has a measurable cost, and a drop in perceived performance is enough to make a customer abandon their journey. Users no longer tolerate slowness, and IT teams are under pressure to meet increasingly stringent SLAs in an increasingly complex environment.

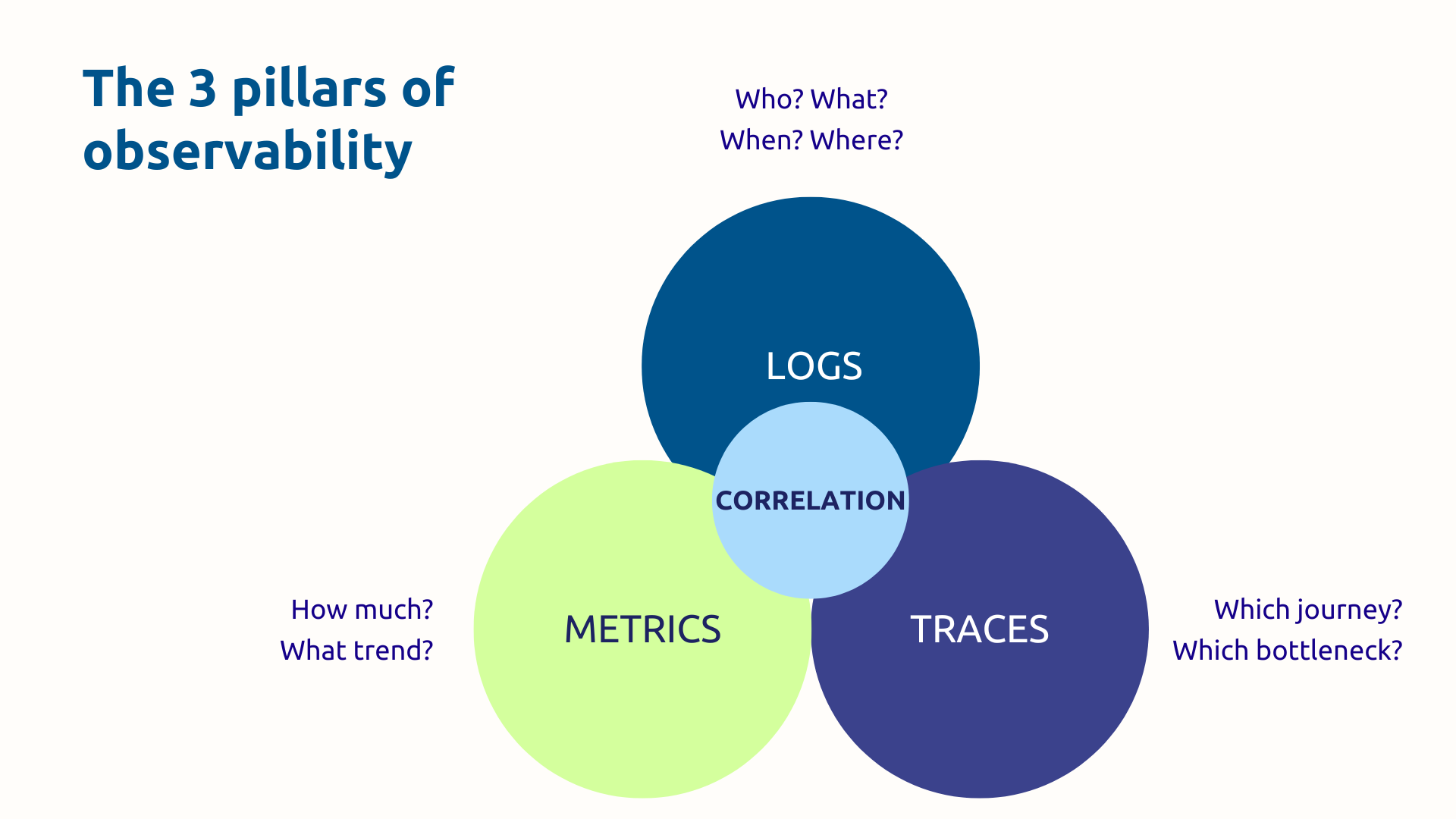

The 3 pillars of observability

Pillar 1 — Logs

Logs are time-stamped records of everything that happens in your systems: errors, transactions, user actions, status changes. They answer the questions “Who? What? When? Where?”

A structured log (in JSON format) is far more usable than a free-text log: it can be indexed, filtered, and correlated automatically. Once centralized in a single place, logs from all your sources (applications, infrastructure, security, audit) become a highly effective investigative tool.
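To make the difference concrete, here is a minimal sketch using only Python's standard `logging` module: a custom formatter emits each record as one JSON object, with business context attached as separate, filterable fields rather than buried in free text. The `order_id` and `user_id` fields are hypothetical, and this is an illustration rather than the Azure or OpenTelemetry logging pipeline.

```python
import json
import logging


class JsonFormatter(logging.Formatter):
    """Render each log record as a single-line JSON object."""

    def format(self, record: logging.LogRecord) -> str:
        entry = {
            "timestamp": self.formatTime(record, "%Y-%m-%dT%H:%M:%S"),
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        # Structured context passed via extra={"context": {...}} becomes
        # top-level fields that a log store can index and filter on.
        entry.update(getattr(record, "context", {}))
        return json.dumps(entry)


def build_logger(name: str) -> logging.Logger:
    """Create a logger that writes JSON lines to stderr."""
    handler = logging.StreamHandler()
    handler.setFormatter(JsonFormatter())
    logger = logging.getLogger(name)
    logger.handlers = [handler]
    logger.setLevel(logging.INFO)
    logger.propagate = False  # avoid duplicate plain-text output via the root logger
    return logger


if __name__ == "__main__":
    log = build_logger("checkout")
    # Hypothetical e-commerce fields, for illustration only.
    log.info("payment confirmed",
             extra={"context": {"order_id": "A-1042", "user_id": "u-77"}})
```

Once such lines reach a central store, they can be filtered by field, for instance every entry carrying a given `order_id`.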

Volumes can be considerable, storage costs add up, and you need to separate signal from noise. But as long as your logs are properly configured, you can trace exactly what happened during an incident: for example, every step of an e-commerce transaction, from adding an item to the cart to confirming payment.

Pillar 2 — Metrics

Metrics are numerical measurements collected over time: CPU usage, latency, error rate, requests per second. They answer the question “How much?” and let you visualize the overall health of your systems.

The main advantages of metrics are low storage cost and efficient aggregation. A 24-hour latency graph, for example, immediately reveals an abnormal spike at rush hour. Alerts based on thresholds (or, better, on anomaly detection) then allow a rapid reaction.
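The gap between a static threshold and anomaly detection can be sketched in a few lines of Python. The rolling z-score below is a deliberately simple stand-in chosen for illustration; it is not the algorithm Azure Monitor uses for its dynamic thresholds. On the hypothetical latency series, a fixed 500 ms threshold stays silent while the z-score flags the 400 ms spike.

```python
from statistics import mean, stdev


def threshold_alerts(samples, limit):
    """Indices of samples strictly above a fixed, predefined threshold."""
    return [i for i, value in enumerate(samples) if value > limit]


def zscore_anomalies(samples, window=12, z=3.0):
    """Indices of samples more than `z` standard deviations away from the
    mean of the preceding `window` samples (a rolling baseline)."""
    flagged = []
    for i in range(window, len(samples)):
        history = samples[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma > 0 and abs(samples[i] - mu) / sigma > z:
            flagged.append(i)
    return flagged


# Hypothetical latency samples in milliseconds, with one spike at index 15.
latency_ms = [100, 102, 98, 101, 99, 103, 97, 100, 102, 98,
              101, 99, 100, 104, 96, 400, 101, 99]

print(threshold_alerts(latency_ms, limit=500))  # → []
print(zscore_anomalies(latency_ms))             # → [15]
```

The baseline adapts to the recent history, so the same code would also catch a spike on a service whose normal latency is 20 ms or 2 s, without anyone choosing a number in advance.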

On the downside, metrics lose granularity and lack context: an isolated metric tells you that something is happening, but rarely why. This is where the third pillar comes in.

Pillar 3 — Distributed traces

Distributed tracing lets you follow the full path of a request through every service in your architecture. At each stage (called a span), the duration and metadata of the call are recorded. Together, the spans form a complete trace, visualized as a waterfall diagram.
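The span/trace relationship can be modeled with a small data structure. This sketch is not the OpenTelemetry data model: the `Span` fields and the text rendering are assumptions chosen to show how start offsets and durations turn into a waterfall, with one row per span ordered by start time.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Span:
    name: str
    start_ms: float    # offset from the start of the trace
    duration_ms: float
    depth: int = 0     # nesting level: 0 for the root span


def render_waterfall(spans: List[Span], ms_per_char: float = 50.0) -> str:
    """One text row per span: the label is indented by depth, the bar is
    shifted by the start offset and sized by the duration."""
    rows = []
    for span in sorted(spans, key=lambda s: s.start_ms):
        offset = " " * int(span.start_ms // ms_per_char)
        bar = "#" * max(1, int(span.duration_ms // ms_per_char))
        label = ("  " * span.depth + span.name).ljust(16)
        rows.append(f"{label}|{offset}{bar} {span.duration_ms:.0f} ms")
    return "\n".join(rows)


# Hypothetical trace of a checkout request.
trace = [
    Span("checkout", 0, 2000),
    Span("catalog", 10, 40, depth=1),
    Span("payment", 100, 1800, depth=1),
    Span("notification", 1910, 40, depth=1),
]
print(render_waterfall(trace))
```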

Imagine an online purchase that chains 15 calls across catalog → basket → stock check → payment → notification. The trace shows that the payment service takes 1.8 seconds to respond while all the others respond in under 50 milliseconds. You have identified the bottleneck in a few clicks.
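Finding that bottleneck can be automated by computing each span's self time: its duration minus the time spent in its child calls. The sketch below assumes child calls run sequentially inside their parent (parallel children would need interval merging) and keys results by span name, which is assumed unique in this hypothetical trace. The durations mirror the example above.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Span:
    span_id: str
    name: str
    duration_ms: float
    parent_id: Optional[str] = None


def self_times(spans: List[Span]) -> Dict[str, float]:
    """Duration of each span minus the summed duration of its direct children
    (assumes sequential children and unique span names)."""
    child_total: Dict[str, float] = {}
    for span in spans:
        if span.parent_id is not None:
            child_total[span.parent_id] = child_total.get(span.parent_id, 0.0) + span.duration_ms
    return {s.name: s.duration_ms - child_total.get(s.span_id, 0.0) for s in spans}


def bottleneck(spans: List[Span]) -> str:
    """Name of the span that spends the most time in its own work."""
    times = self_times(spans)
    return max(times, key=times.get)


# Hypothetical purchase trace: the payment call dominates.
purchase = [
    Span("root", "purchase", 1990),
    Span("s1", "catalog", 40, "root"),
    Span("s2", "basket", 30, "root"),
    Span("s3", "stock-check", 45, "root"),
    Span("s4", "payment", 1800, "root"),
    Span("s5", "notification", 35, "root"),
]
print(bottleneck(purchase))  # → payment
```

Using self time rather than raw duration avoids blaming the root span, whose 1990 ms mostly belongs to its children.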

In this regard, the OpenTelemetry standard has established itself as the reference for instrumenting applications in a portable, interoperable way. Azure supports it natively, notably through Azure Monitor and OpenTelemetry-compatible SDKs.

The power of the correlation of the 3 pillars

Taken separately, logs, metrics, and traces are already useful tools. When correlated, they become powerful.

Consider the following typical workflow:

- A metric triggers an alert (high latency).

- The traces identify the service responsible.

- The logs provide the precise context: which request, which user, which exact error.

Thus, hours of manual diagnosis can be reduced to a few minutes of targeted investigation.
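The three-step workflow can be expressed as a short function over in-memory telemetry. The record shapes, the shared `trace_id` field, and the sample values are hypothetical; in Azure, the equivalent rows would live in Application Insights tables and be joined with KQL rather than Python.

```python
def investigate(metric_value, threshold, spans, logs):
    """Metric -> traces -> logs. Returns None when no alert fires, otherwise
    the slowest span's service and the log entries sharing its trace_id."""
    # 1. The metric triggers the alert.
    if metric_value <= threshold:
        return None
    # 2. The traces point to the service responsible (slowest span).
    slowest = max(spans, key=lambda span: span["duration_ms"])
    # 3. The logs with the same trace_id supply the precise context.
    context = [entry for entry in logs if entry["trace_id"] == slowest["trace_id"]]
    return slowest["service"], context


# Hypothetical telemetry for illustration.
spans = [
    {"trace_id": "t1", "service": "catalog", "duration_ms": 40},
    {"trace_id": "t1", "service": "payment", "duration_ms": 1800},
    {"trace_id": "t2", "service": "catalog", "duration_ms": 35},
]
logs = [
    {"trace_id": "t1", "user_id": "u-77", "message": "payment provider timeout"},
    {"trace_id": "t2", "user_id": "u-12", "message": "catalog page served"},
]

service, context = investigate(2100, 300, spans, logs)
print(service)                # → payment
print(context[0]["message"])  # → payment provider timeout
```

The join key is what makes this fast: without a shared identifier, step 3 becomes a manual search by timestamp.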

However, this correlation is impossible with siloed tools: it requires a unified platform that collects and links all three types of data. That is precisely what the Azure ecosystem offers.

The Azure ecosystem for observability

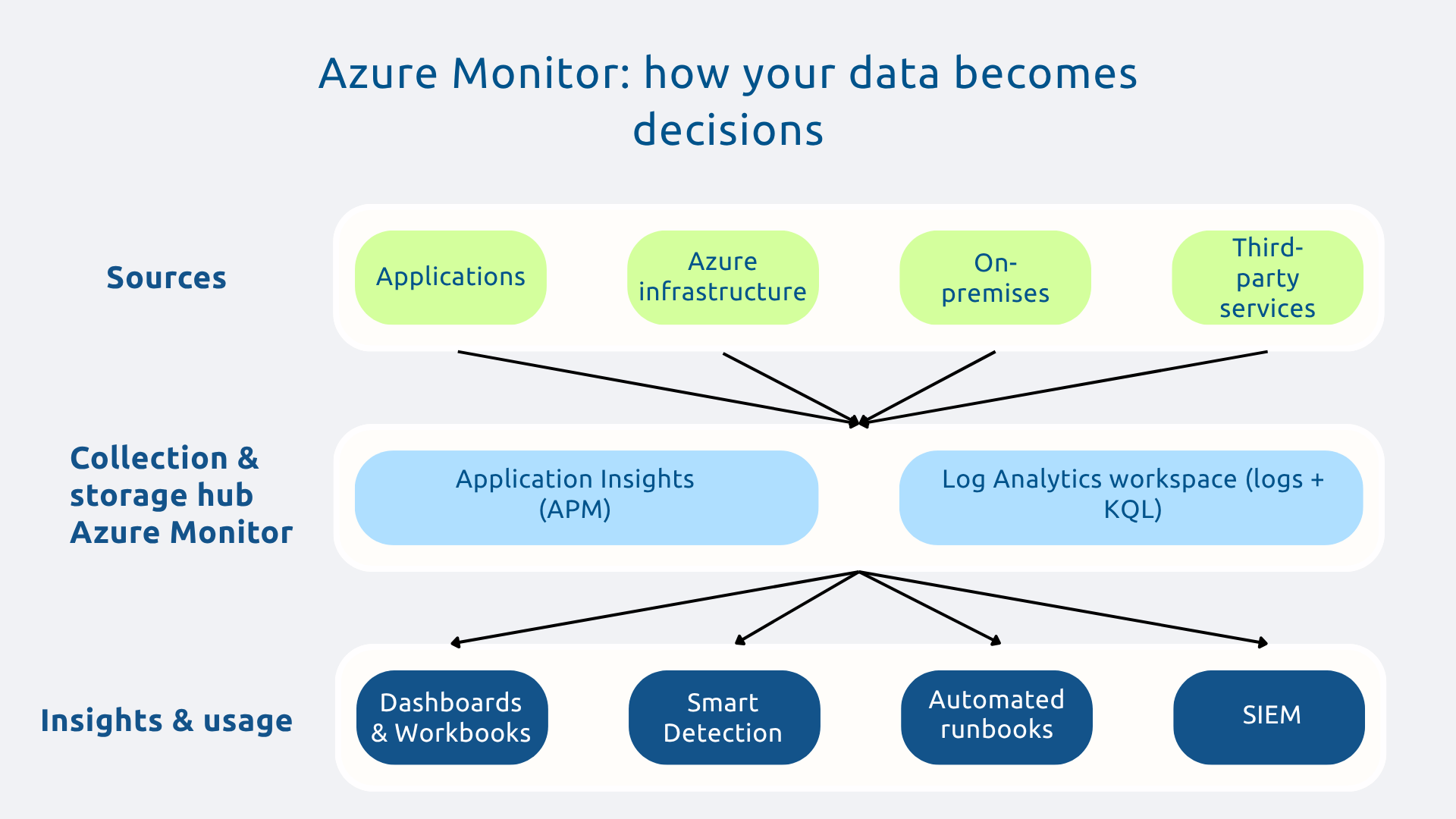

Azure Monitor: the unified observability platform

Azure Monitor is the central hub of any observability strategy on Azure. It collects, aggregates, and analyzes telemetry from across your environment: native Azure resources, applications, on-premises infrastructure via Azure Arc, and even other clouds.

The data converges into centralized storage (chiefly a Log Analytics workspace), from which you can trigger alerts, build interactive dashboards (workbooks), configure autoscaling, and generate reports. Integration with Azure services is native, which significantly reduces the instrumentation effort.

Application Insights: advanced application observability

Application Insights is Microsoft's APM (Application Performance Monitoring) solution. It offers complete visibility into the behavior of your applications: latency, error rates, dependencies, exceptions, slow requests.

Its flagship feature, the Application Map, automatically generates a visual map of your microservices architecture and all its dependencies, with their response times. At a glance, you can see where the bottlenecks are and which components are in trouble.

Application Insights also supports a variety of languages, including .NET, Java, Node.js, and Python, and integrates natively with Visual Studio and Azure DevOps. It includes user-behavior analytics (sessions, journeys, page views) as well, so you can correlate technical performance with real user experience.

Log Analytics: query and analyze on a large scale

Log Analytics is the query engine that turns terabytes of raw data into actionable insights. All your logs, whatever their source, are centralized in a single workspace and searchable with KQL (Kusto Query Language).

With a few lines of KQL, you can find every HTTP 500 error from the last 24 hours, break them down by service, and correlate them with traffic peaks. Queries can also be saved, turned into automatic alerts, or embedded in dashboards.
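In KQL, such a query might look roughly like `requests | where timestamp > ago(24h) and resultCode == "500" | summarize count() by cloud_RoleName` (classic Application Insights schema; table and column names differ in workspace-based tables). The Python sketch below reproduces the same logic over hypothetical in-memory request records: count 500s per service, then compute an hourly error rate to set against traffic volume. The record fields and function names are assumptions.

```python
from collections import Counter
from datetime import datetime, timedelta


def errors_by_service(records, now, hours=24):
    """Count HTTP 500 responses per service over the last `hours` hours."""
    since = now - timedelta(hours=hours)
    return Counter(r["service"] for r in records
                   if r["timestamp"] >= since and r["status"] == 500)


def hourly_error_rate(records, now, hours=24):
    """Map each hour to (requests, 500 errors, error rate), so error
    counts can be compared with traffic peaks."""
    since = now - timedelta(hours=hours)
    buckets = {}
    for r in records:
        if r["timestamp"] < since:
            continue
        hour = r["timestamp"].replace(minute=0, second=0, microsecond=0)
        total, errors = buckets.get(hour, (0, 0))
        buckets[hour] = (total + 1, errors + int(r["status"] == 500))
    return {h: (t, e, e / t) for h, (t, e) in sorted(buckets.items())}


# Hypothetical request records.
now = datetime(2024, 5, 1, 12, 0)
records = [
    {"service": "payment", "status": 500, "timestamp": datetime(2024, 5, 1, 11, 10)},
    {"service": "payment", "status": 500, "timestamp": datetime(2024, 5, 1, 11, 20)},
    {"service": "catalog", "status": 200, "timestamp": datetime(2024, 5, 1, 11, 30)},
    {"service": "catalog", "status": 500, "timestamp": datetime(2024, 5, 1, 10, 5)},
    {"service": "payment", "status": 500, "timestamp": now - timedelta(hours=30)},  # too old
]
print(errors_by_service(records, now))  # → Counter({'payment': 2, 'catalog': 1})
```

Reporting the rate alongside the raw count matters: a rise in 500s during a traffic peak may be proportional load, whereas a rising rate points to a real fault.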

In addition, data retention is configurable according to your regulatory and operational needs. Finally, Log Analytics also integrates with Microsoft Sentinel for security use cases (SIEM).

Additional Azure ecosystem tools

Azure Monitor and its components don't work alone. The ecosystem includes several complementary tools that round out its observability coverage:

- Azure Service Health: real-time alerts on incidents affecting the Azure services you depend on.

- Azure Advisor: proactive recommendations on the performance, cost, and security of your environment.

- Network Watcher: monitoring and diagnostics for the Azure network (connectivity, traffic flows, latency).

- Azure Managed Grafana: advanced visualization with native Azure integration, for custom dashboards.

- Azure Monitor managed service for Prometheus: metrics collection for Kubernetes workloads (AKS).

The advantage of this suite is that it is integrated by design: there is no need to assemble disparate third-party solutions and manage the friction between them. Azure offers a cohesive ecosystem that avoids tool silos as well as shadow IT.

Methodology: building your observability strategy

Step 1 — Define goals and SLIs/SLOs

Before you instrument anything, ask yourself the essential question: what really matters for your business?

The answer should be expressed as:

- SLIs (Service Level Indicators): metrics that objectively measure the performance of your services (availability, latency, error rate);

- SLOs (Service Level Objectives): the target to reach for each of these indicators (e.g. latency under 300 ms for 99% of requests).

SLIs and SLOs should be aligned with critical user journeys and business priorities. This framework, inspired by Google SRE practices, ensures that observability produces actionable insights rather than a torrent of meaningless data.
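Using the SLO example from the list above (300 ms for 99% of requests), an SLI and the remaining error budget reduce to simple arithmetic. The function names and the sample window are hypothetical; the error-budget notion (1 minus the SLO) comes from the same Google SRE practices.

```python
def latency_sli(latencies_ms, threshold_ms=300.0):
    """SLI: fraction of requests served in under `threshold_ms`."""
    if not latencies_ms:
        return 1.0  # convention: no traffic counts as meeting the objective
    good = sum(1 for value in latencies_ms if value < threshold_ms)
    return good / len(latencies_ms)


def error_budget_remaining(sli, slo=0.99):
    """Share of the error budget (1 - SLO) still unspent; negative once
    the SLO is breached."""
    budget = 1.0 - slo
    spent = 1.0 - sli
    return (budget - spent) / budget


# Hypothetical window: 995 fast requests and 5 slow ones.
window = [120.0] * 995 + [850.0] * 5
sli = latency_sli(window)
print(sli)                                    # → 0.995
print(round(error_budget_remaining(sli), 3))  # → 0.5
```

Half the budget is left: the service meets its objective, but only 5 more slow requests per 1,000 would exhaust it.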

Step 2 — Instrumenting applications and infrastructure

Instrumentation is the indispensable initial investment: the value you get from observability depends directly on its quality.

The Application Insights SDKs and OpenTelemetry libraries make it possible to implement distributed tracing, metric collection, and structured logs with minimal code.

Azure Monitor agents automatically collect data from VMs, containers, and managed services.

We recommend doing this step gradually: start with the services most critical to the business, validate the results, then extend. Standardize instrumentation practices so that all teams produce consistent, correlatable data.
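The idea of instrumenting “with minimal code” can be illustrated with a decorator that times each call and records its outcome. This is a hypothetical, stdlib-only sketch: the `traced` decorator and the `emitted` list are assumptions standing in for what an Application Insights or OpenTelemetry SDK does with a real exporter.

```python
import functools
import time
import uuid

emitted = []  # hypothetical sink; a real SDK would export to a backend


def traced(func):
    """Record one span-like entry (name, duration, status) per call."""
    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        status = "ok"
        try:
            return func(*args, **kwargs)
        except Exception:
            status = "error"
            raise  # instrumentation must not swallow the original exception
        finally:
            emitted.append({
                "span_id": uuid.uuid4().hex[:16],
                "name": func.__name__,
                "duration_ms": round((time.perf_counter() - start) * 1000, 3),
                "status": status,
            })
    return wrapper


@traced
def check_stock(sku):
    """Hypothetical business function: one decorator line instruments it."""
    return sku != "out-of-stock"


check_stock("A-1042")
print(emitted[-1]["name"], emitted[-1]["status"])  # → check_stock ok
```

Because the same decorator is applied everywhere, every team's records share one shape, which is the standardization this step calls for.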

Step 3 — Centralize and correlate data

Centralization turns a collection of disparate monitoring tools into a true observability platform. To achieve it, set up a single Log Analytics workspace as the convergence point for all your data sources.

The correlation is based on the propagation of trace identifiers (trace IDs) across all service calls. When an ID is shared between the logs, metrics, and traces of the same request, navigation between the three pillars becomes fluid and instantaneous.
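In practice the trace identifier travels in an HTTP header. Application Insights and OpenTelemetry both support the W3C Trace Context standard, whose `traceparent` header has the form `version-traceid-parentid-flags`. The sketch below handles version `00` only and shows the key rule: each hop keeps the caller's trace ID and issues a new parent (span) ID. The function names are hypothetical.

```python
import re
import secrets

# W3C Trace Context, version 00: 2-32-16-2 lowercase hex characters.
TRACEPARENT = re.compile(r"^00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$")


def new_traceparent(sampled=True):
    """Start a new trace with a random 16-byte trace ID and 8-byte span ID."""
    flags = "01" if sampled else "00"
    return f"00-{secrets.token_hex(16)}-{secrets.token_hex(8)}-{flags}"


def child_traceparent(incoming):
    """Propagate context to the next call: same trace ID, new span ID."""
    match = TRACEPARENT.match(incoming)
    if match is None:
        raise ValueError(f"invalid traceparent: {incoming!r}")
    trace_id, parent_id, flags = match.groups()
    if trace_id == "0" * 32 or parent_id == "0" * 16:
        raise ValueError("all-zero IDs are invalid")
    return f"00-{trace_id}-{secrets.token_hex(8)}-{flags}"


def trace_id_of(header):
    """Extract the trace ID used to correlate logs, metrics, and traces."""
    return header.split("-")[1]


incoming = "00-4bf92f3577b34da6a3ce929d0e0e4736-00f067aa0ba902b7-01"
outgoing = child_traceparent(incoming)
print(trace_id_of(outgoing))  # → 4bf92f3577b34da6a3ce929d0e0e4736
```

Writing `trace_id_of(...)` into every log record is what allows the navigation between pillars described above.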

Application Insights then automatically generates the Application Map, giving you a real-time view of your architecture and its dependencies.

Step 4 — Create dashboards and smart alerts

A good dashboard should guide you to the essentials, so design differentiated views for each audience:

- strategic summary for the executive committee;

- operational indicators for teams;

- detailed view for on-call SREs.

Alerts should be based on your SLOs. Azure Monitor also offers intelligent detection capabilities that identify anomalous behavior without manually configured thresholds. And by coupling these alerts to automated runbooks, you let the system start remediation without human intervention in the most common cases.

As a result, alert fatigue drops, because teams are notified only of events that truly require action.
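One widely used way to base alerts on SLOs while limiting noise is multiwindow burn-rate alerting, described in Google's SRE workbook; it is swapped in here as an illustration and is not a specific Azure Monitor feature. The burn rate is the observed error ratio divided by the error budget (1 minus the SLO). The 14.4 threshold, which corresponds to spending 2% of a 30-day budget in one hour, follows that workbook; the window sizes and function names are assumptions.

```python
def burn_rate(error_ratio, slo=0.999):
    """How many times faster than sustainable the error budget is consumed
    (1.0 means the budget runs out exactly at the end of the SLO period)."""
    return error_ratio / (1.0 - slo)


def should_page(long_window_ratio, short_window_ratio, slo=0.999, threshold=14.4):
    """Page only if both windows (e.g. 1 h and 5 min) burn above the threshold.
    The long window filters brief blips; the short one lets the alert reset
    soon after the problem stops."""
    return (burn_rate(long_window_ratio, slo) > threshold
            and burn_rate(short_window_ratio, slo) > threshold)


# Hypothetical error ratios (failed / total requests) per window.
print(should_page(0.02, 0.03))    # → True: sustained, ongoing incident
print(should_page(0.02, 0.0005))  # → False: incident already over
print(should_page(0.005, 0.03))   # → False: short blip only
```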

Step 5 — Establish a culture of observability

Observability is as much a cultural as it is a technical transformation.

This means assigning clear ownership: each team is responsible for the observability of its own services. Incident post-mortems become learning exercises that are documented and shared. And instrumentation becomes part of the definition of “done” in development sprints: this is the principle of shift-left observability.

Finally, measure the value: MTTR (mean time to resolve), MTTD (mean time to detect), availability rate. These KPIs concretely demonstrate the return on investment of the approach and help sustain buy-in over time.
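Computing these KPIs from incident records is straightforward. The sketch below assumes each incident stores hypothetical `started`, `detected`, and `resolved` timestamps and measures both MTTD and MTTR from the start of the incident; some teams measure MTTR from detection instead, so the convention should be fixed once and applied consistently.

```python
from datetime import datetime


def mean_minutes(deltas):
    """Average of a list of timedeltas, in minutes."""
    return sum(d.total_seconds() for d in deltas) / 60 / len(deltas)


def mttd_mttr(incidents):
    """MTTD = mean(detected - started); MTTR = mean(resolved - started)."""
    mttd = mean_minutes([i["detected"] - i["started"] for i in incidents])
    mttr = mean_minutes([i["resolved"] - i["started"] for i in incidents])
    return mttd, mttr


# Hypothetical incident log.
incidents = [
    {"started": datetime(2024, 5, 1, 10, 0), "detected": datetime(2024, 5, 1, 10, 10),
     "resolved": datetime(2024, 5, 1, 11, 0)},
    {"started": datetime(2024, 5, 2, 14, 0), "detected": datetime(2024, 5, 2, 14, 20),
     "resolved": datetime(2024, 5, 2, 14, 40)},
]
print(mttd_mttr(incidents))  # → (15.0, 50.0)
```

Tracked release after release, a falling MTTD shows the value of better alerting, and a falling gap between MTTD and MTTR shows faster diagnosis.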

Intertwined within a unified platform, the three pillars provide the end-to-end visibility that modern architectures require. Technology alone is not enough, however: you also need a clear strategy, rigorous instrumentation, and an aligned team culture.

As information systems grow more complex, organizations that master observability will have a decisive advantage: the ability to guarantee the user experience, reduce incident costs, and innovate faster, with confidence.

Is your current monitoring strategy sufficient to manage your hybrid information system? Request an audit from Askware to identify your blind spots and build your roadmap to complete observability.